This post is part of a series “The Quest for General Artificial Intelligence”. To access the index for this series click here.

Actions are a fundamental element of the RL model. Without them, the Agent could neither selectively reap rewards nor explore the Environment. As a first step, we will represent actions not with the term used in classical RL, but with

to explicitly indicate that the action is an expected one that might be subject to interference. One source of such interference is the Environment itself, as illustrated by the Frozen Lake problem in [1]. The classical RL model can handle this type of stochasticity, and the learned policy can account for the random nature of the external actions.
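The gap between an expected action and the one the Environment actually applies can be sketched in a few lines. This is a minimal illustration that only loosely follows the Frozen Lake setting. The names `executed_action`, `mismatch_rate` and `slip_prob`, as well as the rule that a slip moves the Agent perpendicular to the requested direction, are assumptions made for the example.

```python
import random

# Hypothetical slip model: when the Agent slips, the Environment applies
# one of the two directions perpendicular to the requested one.
PERPENDICULAR = {
    "left": ("up", "down"),
    "right": ("up", "down"),
    "up": ("left", "right"),
    "down": ("left", "right"),
}


def executed_action(expected, slip_prob, rng):
    """Return the action the Environment actually applies."""
    if rng.random() < slip_prob:
        return rng.choice(PERPENDICULAR[expected])
    return expected


def mismatch_rate(expected, slip_prob, trials=10_000, seed=0):
    """Fraction of trials in which the executed action differs from the expected one."""
    rng = random.Random(seed)
    mismatches = sum(
        executed_action(expected, slip_prob, rng) != expected
        for _ in range(trials)
    )
    return mismatches / trials
```

With `slip_prob` set to zero the expected and executed actions always agree. As it grows, the Agent can only learn the distribution of outcomes, which is what a classical policy ends up encoding.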

But there is another kind of stochasticity that is currently poorly considered, sometimes with catastrophic results: a change in the performance of the Agent’s actuators, or an augmentation of the Agent’s structure (e.g., through the use of tools). At present, these types of changes simply result in erroneous or even self-destructive behaviour.

In the classical RL the action is represented as the result of the policy execution for a given state:
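In standard textbook notation (the symbols here are the conventional ones and may differ from those used elsewhere in this series), a deterministic policy $\pi$ maps the state $s_t$ to the action $a_t$:

$$a_t = \pi(s_t)$$

For a stochastic policy the action is instead sampled, $a_t \sim \pi(\cdot \mid s_t)$.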

In this case the external rewards are reflected in the structure of the policy during learning, and therefore they are not explicitly needed to determine the action. In the GRL model, the

will have to be replaced with

and, since the rewards are continuously updated, we will include them as a parameter. So, initially we would have:

To account for the differences between the expected actions and the actual interaction between the Agent and the Environment, we need to provide the Agent with proprioception. We will represent this as a function of the expected actions from the previous time step and the current observations of the Environment:
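One way to make such a function concrete is the minimal sketch below. It assumes a one-dimensional Agent whose action is a requested displacement and whose observation is the displacement actually measured. The name `proprioception`, the scalar "gain" it returns and the threshold `eps` are all hypothetical choices for illustration, not part of the GRL model itself.

```python
def proprioception(last_expected_action: float,
                   observed_displacement: float,
                   eps: float = 1e-9) -> float:
    """Estimate how faithfully the actuator executed the last expected action.

    Returns the ratio observed / expected: 1.0 means the actuator behaved as
    expected, 0.5 means it delivered half of the requested displacement.
    """
    if abs(last_expected_action) < eps:
        # No movement was requested, so nothing can be inferred; assume nominal.
        return 1.0
    return observed_displacement / last_expected_action
```

For example, `proprioception(2.0, 1.0)` yields `0.5`, signalling that the actuator now delivers only half of what was requested.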

Now we can include this measure of proprioception into the previous formula as follows:
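The effect of feeding proprioception back into action selection can be illustrated with a toy closed loop. The sketch below assumes a one-dimensional Agent that moves toward a target, an actuator whose gain drops from 1.0 to 0.5 at step 5, and a simple proportional rule standing in for the policy. The reward parameter is left out for brevity. All names (`run`, `gain_estimate`, `use_proprioception`) are hypothetical.

```python
def run(steps: int = 20, target: float = 10.0,
        use_proprioception: bool = True) -> float:
    """Simulate the loop and return the Agent's final position."""
    position = 0.0
    gain_estimate = 1.0  # the Agent's current belief about its actuator
    for t in range(steps):
        true_gain = 1.0 if t < 5 else 0.5  # the actuator degrades at t = 5

        # Stand-in policy: aim to close half of the remaining distance.
        desired = 0.5 * (target - position)

        # With proprioception, the expected action is scaled to compensate
        # for the estimated actuator gain.
        if use_proprioception:
            expected_action = desired / gain_estimate
        else:
            expected_action = desired

        # The Environment applies the action through the real actuator.
        displacement = true_gain * expected_action
        position += displacement

        # Proprioception: compare the last expected action with what was observed.
        if abs(expected_action) > 1e-9:
            gain_estimate = displacement / expected_action
    return position


error_with = abs(10.0 - run(use_proprioception=True))
error_without = abs(10.0 - run(use_proprioception=False))
```

In this toy setting the Agent that uses proprioception detects the degraded actuator after a single step and ends closer to the target than the one that ignores it.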

We argue that this approach can account for changes in the proprio-structure, a feature that has attracted significant interest in recent years. In [2], Kwiatkowski and Lipson present a model that allows a physical robot to account for actuator dysfunctions or for changes in its physical structure.

An even more interesting property that the proprioception function would implement is the ability to reflect the impact of using tools. It is widely held today that the use of tools has contributed significantly to the evolution of the human cortex, and that the two reinforce each other. A proper model for General AI would need to consider the ability to use tools and to incorporate them into the Agent’s model.

Ever since the work of Iriki, Tanaka and Iwamura [3], a growing body of research has confirmed that tool use affects the representation of personal space. In [4], Martel et al. argue that it also affects the body representation. Tools are not merely external instruments used as intermediaries in the interaction with the Environment; they become part of the Agent.

Example 1:

For instance, the end effector when using a pen is not the group of fingers holding the pen (the most remote body part involved in the interaction), but the actual tip of the pen. The Agent actually feels the tip of the pen as it slides on the paper.

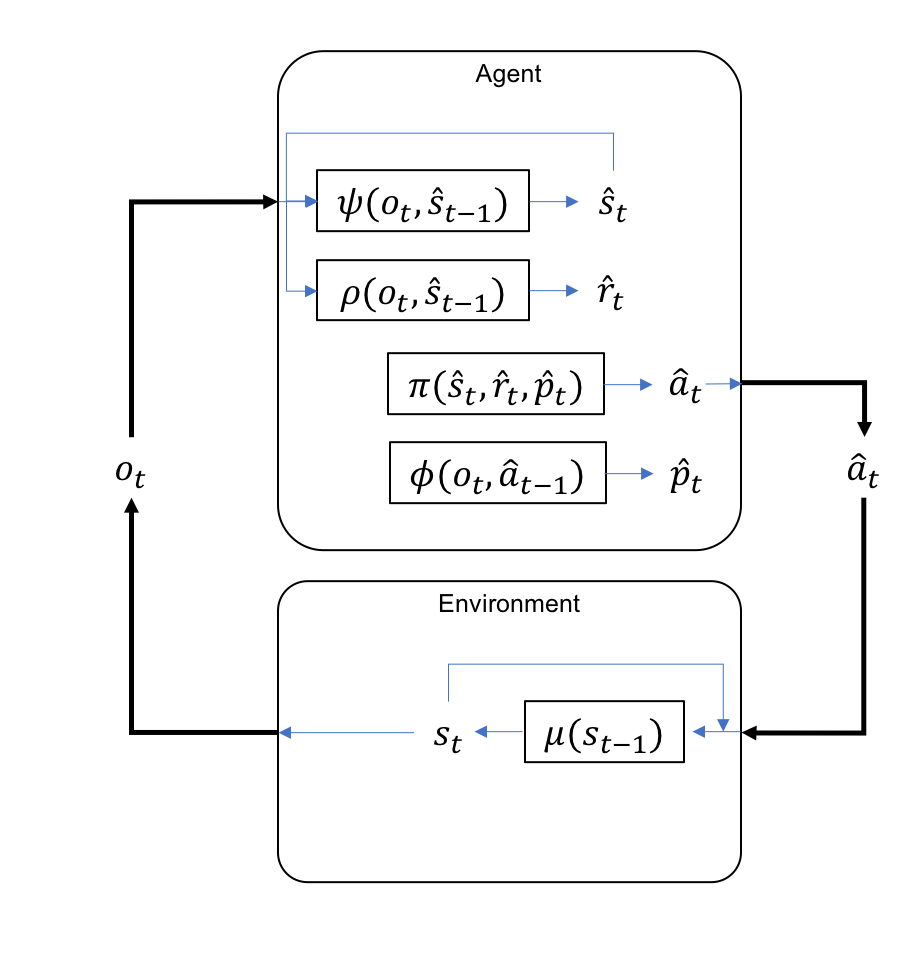
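The pen example can be read in kinematic terms: once the tool is incorporated, the point the Agent controls moves from the fingers to the tip of the tool. The sketch below is a rough illustration under strong assumptions: a planar arm, a tool held rigidly in line with the last link, and hypothetical names (`end_effector`, `tool_length`). It is not a model of the neural findings cited above.

```python
import math


def end_effector(link_lengths, joint_angles, tool_length=0.0):
    """Planar forward kinematics; a held tool simply extends the last link.

    link_lengths: length of each arm segment
    joint_angles: angle of each joint relative to the previous segment (radians)
    tool_length:  extra reach contributed by the tool (0.0 means bare hand)
    """
    lengths = list(link_lengths)
    lengths[-1] += tool_length  # the tool becomes part of the body model
    x = y = heading = 0.0
    for length, angle in zip(lengths, joint_angles):
        heading += angle
        x += length * math.cos(heading)
        y += length * math.sin(heading)
    return x, y


fingers = end_effector([0.30, 0.25], [0.0, 0.0])
pen_tip = end_effector([0.30, 0.25], [0.0, 0.0], tool_length=0.15)
```

The same joint angles produce a different end-effector position once `tool_length` is non-zero, which is the kind of change a proprioception function would have to pick up.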

We can now complete the diagram of the GRL model by including the additional functions, and

as well as closing the loop between the Agent and Environment with the action

:

References

[1] Richard S. Sutton and Andrew G. Barto. Reinforcement Learning: An Introduction. Adaptive Computation and Machine Learning. Cambridge, Mass: MIT Press, 1998. 322 pp. isbn: 978-0-262-19398-6

[2] Robert Kwiatkowski and Hod Lipson. “Task-Agnostic Self-Modeling Machines”. In: Science Robotics 4.26 (Jan. 30, 2019), eaau9354. issn: 2470-9476. doi: 10.1126/scirobotics.aau9354. url: http://robotics.sciencemag.org/lookup/doi/10.1126/scirobotics.aau9354.

[3] A. Iriki, Michio Tanaka, and Yoshiaki Iwamura. “Coding of Modified Body Schema During Tool Use by Macaque Postcentral Neurones”. In: Neuroreport 7 (Nov. 1, 1996), pp. 2325–30. doi: 10.1097/00001756-199610020-00010.

[4] Marie Martel et al. “Tool-Use: An Open Window into Body Representation and Its Plasticity”. In: Cognitive Neuropsychology 33.1-2 (Feb. 17, 2016), pp. 82–101. issn: 0264-3294, 1464-0627. doi: 10.1080/02643294.2016.1167678. url: http://www.tandfonline.com/doi/full/10.1080/02643294.2016.1167678.
